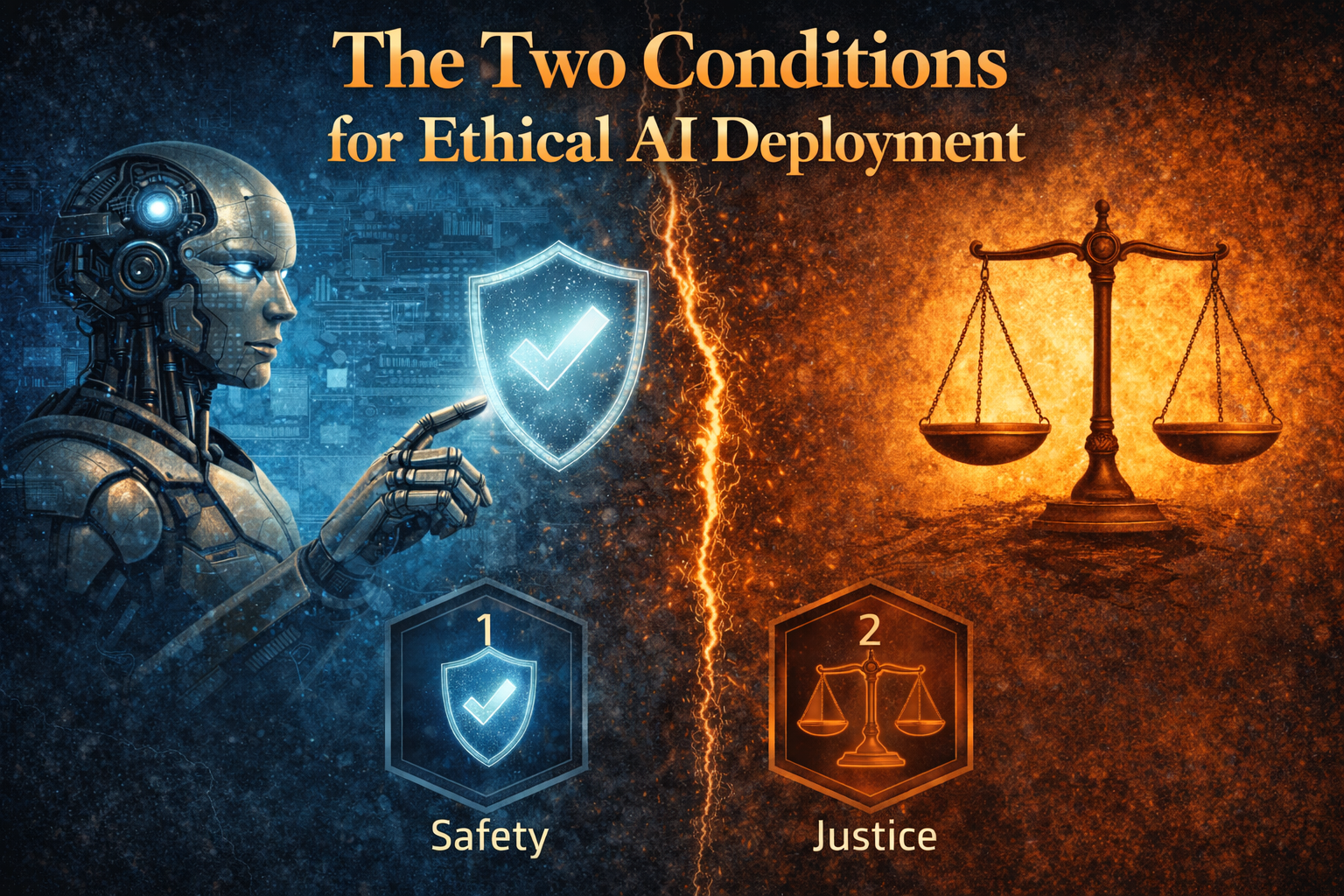

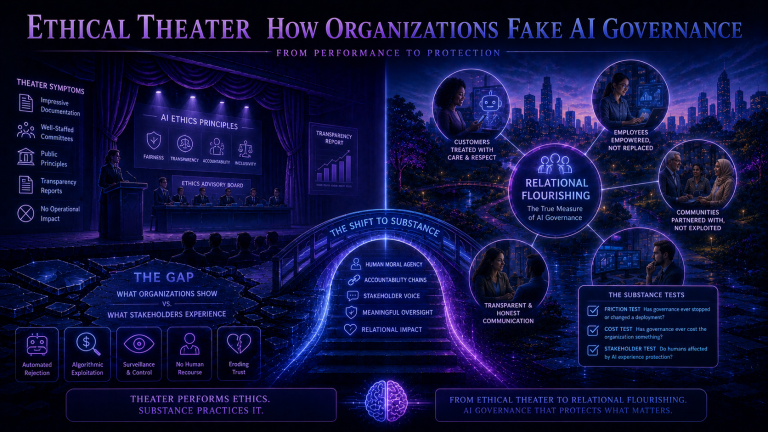

Throughout this series, we have established foundational principles for AI governance. AI lacks moral agency and always will. The governance question concerns how humans exercise moral agency through AI systems, not how to control AI behavior. The critical trigger is when AI shifts from tool to role. The Vacancy Problem emerges when AI fills positions without being capable of what they require. The Derivative Principle tells us that ethical assessment must be directional, measuring whether AI deployment moves relationships toward flourishing or away from it.

These principles now converge into specific requirements. For AI deployment to qualify as ethical under this framework, two conditions must be satisfied. Both conditions are necessary, and neither alone is sufficient. Organizations that satisfy one while failing the other are not doing ethics partially; they are failing at different points in what must be a complete system. Understanding why both conditions matter, and why they must operate together, is essential for governance professionals seeking authentic ethical practice.

Condition One: Structural Accountability

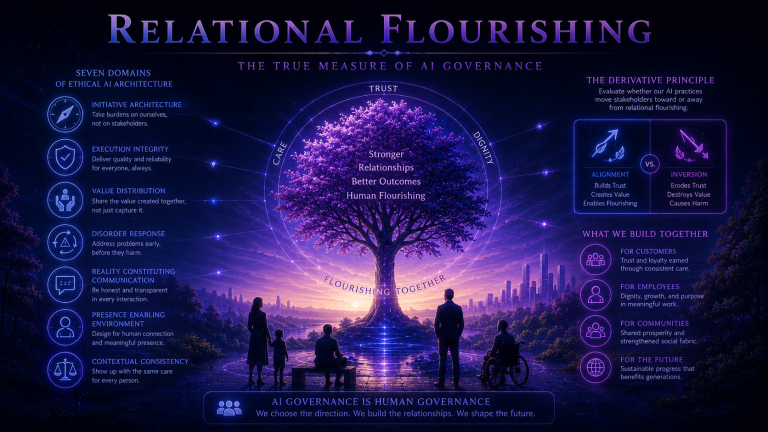

Structural accountability means that AI roles exist within organizational systems where human moral agency remains present, active, and responsible. This is more demanding than it might appear. It is not sufficient that humans designed the AI system at some point in the past. It is not sufficient that humans could theoretically intervene in AI operations if they chose to. It is not sufficient that organizational charts identify someone nominally accountable. Structural accountability requires that humans actually exercise moral judgment over AI outcomes as part of the system’s normal operation.

Structural accountability has several components. Design accountability means that humans with moral agency designed the AI role with clear understanding of what the role involves and what moral obligations it carries. Operational accountability means that humans exercise ongoing judgment over AI operations, not merely monitoring technical performance but assessing whether the AI role serves its relational purposes and whether specific cases require human intervention. Outcome accountability means that humans accept genuine responsibility for the outcomes AI produces. When AI systems cause harm, identifiable humans acknowledge that harm and bear consequences for failures.

Structural accountability also requires intervention capacity: the practical ability for humans to intervene when moral judgment requires it. This capacity is undermined when AI systems operate at scales or speeds that make human oversight impossible, when organizational structures insulate AI operations from human review, or when system complexity prevents humans from understanding outputs. Finally, structural accountability requires access for affected parties: people affected by AI must be able to reach human moral agents who can address their concerns. If customers cannot reach humans or employees cannot appeal to humans, then AI has effectively replaced moral agency rather than operating subordinate to it.

Condition Two: Directional Alignment

Structural accountability ensures that humans remain in the loop. Directional alignment ensures that the loop is pointed in the right direction. An organization can satisfy structural accountability completely while failing directional alignment if the accountable humans have aimed the system at relational destruction rather than flourishing. The distinction is critical: organizations can maintain perfect accountability over systems designed to harm.

Directional alignment asks: Is this AI role designed to build relational value or to extract, exploit, or destroy it? Consider three organizations with AI-powered customer service systems. All three maintain structural accountability with human designers, operators, and oversight.

The first organization designed its system to help customers solve problems efficiently. AI handles straightforward issues quickly while complex issues route to humans promptly. Success is measured by problem resolution and satisfaction. This organization demonstrates directional alignment. The system aims at serving customer needs and building trust through effective assistance.

The second organization designed its system to deflect customer contacts because humans are expensive. Success is measured by deflection rate and cost per contact. This organization fails directional alignment despite maintaining structural accountability. The system aims at avoiding relationships with customers who need help, degrading relational value in the service of operational efficiency.

The third organization designed its system to extract additional purchases. The AI treats each customer contact as a sales opportunity. Success is measured by attachment rate and revenue per contact. This organization also fails directional alignment. The system aims at exploiting customer relationships for extraction, treating customer needs as opportunities for organizational gain.

Why Both Conditions Are Necessary

Organizations satisfying the first condition but failing the second have accountability without alignment. Humans are in the loop, but the loop is pointed toward harm. Every inverting outcome was chosen by humans who could have chosen otherwise. The accountability structures document these choices clearly. But the decisions moved relationships toward degradation rather than flourishing. Such organizations are not ethically neutral. They are accountably harmful.

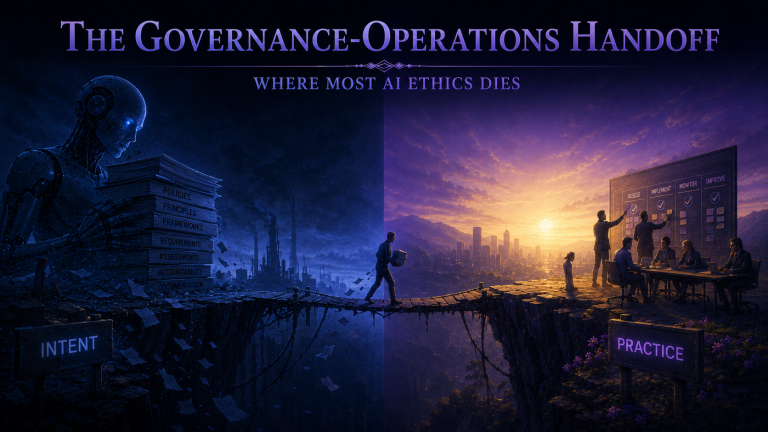

Organizations attempting the second condition without the first have aspirations without structure. They may intend for AI to serve stakeholder flourishing. Mission statements may articulate noble purposes. But without accountability structures ensuring that humans actually exercise moral judgment over outcomes, good intentions cannot translate into reliable practice. AI will optimize for whatever parameters humans set, and without structural accountability, those parameters may drift toward organizational benefit at stakeholder expense regardless of stated values. No organization can achieve directional alignment without structural accountability because alignment requires ongoing human judgment that only accountability structures can ensure.

The two conditions work together as a complete system. Structural accountability ensures that moral agency is present. Directional alignment ensures that moral agency is exercised well. Neither alone accomplishes what ethics requires. Together, they specify what ethical AI deployment looks like in operational terms.

Implementation Implications

For governance officers, the Two Conditions framework provides clear policy requirements. Every AI deployment at role level must demonstrate structural accountability: documented chains of responsibility, designed intervention mechanisms, accessible human touchpoints. Every deployment must demonstrate directional alignment: purpose oriented toward stakeholder flourishing, metrics measuring relationship quality. Governance review must evaluate both conditions independently, recognizing that satisfying one does not indicate satisfying the other.
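For teams that track governance reviews in software, the independent evaluation described above can be sketched as a simple record and two separate checks. This is a minimal illustration, not a prescribed tool: the `DeploymentReview` class, its field names, and the `assess` function are all hypothetical, and each boolean stands in for a human judgment made during review rather than something a program can determine on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentReview:
    """Hypothetical review record for one role-level AI deployment.

    Every field records a reviewer's finding; none is computed automatically.
    """
    name: str
    # Evidence for Condition One: structural accountability
    responsibility_chain_documented: bool
    intervention_mechanism_designed: bool
    human_touchpoint_accessible: bool
    # Evidence for Condition Two: directional alignment
    purpose_serves_stakeholder_flourishing: bool
    metrics_measure_relationship_quality: bool


def structural_accountability(review: DeploymentReview) -> bool:
    """Condition One holds only if every accountability element is present."""
    return (
        review.responsibility_chain_documented
        and review.intervention_mechanism_designed
        and review.human_touchpoint_accessible
    )


def directional_alignment(review: DeploymentReview) -> bool:
    """Condition Two holds only if purpose and metrics both point toward flourishing."""
    return (
        review.purpose_serves_stakeholder_flourishing
        and review.metrics_measure_relationship_quality
    )


def assess(review: DeploymentReview) -> str:
    """Evaluate the two conditions independently, then combine the findings."""
    accountable = structural_accountability(review)
    aligned = directional_alignment(review)
    if accountable and aligned:
        return "both conditions satisfied"
    if accountable:
        return "accountability without alignment"
    if aligned:
        return "aspirations without structure"
    return "neither condition satisfied"


# Illustrative record modeled on the cost-deflection customer service example.
deflection_system = DeploymentReview(
    name="contact deflection assistant",
    responsibility_chain_documented=True,
    intervention_mechanism_designed=True,
    human_touchpoint_accessible=True,
    purpose_serves_stakeholder_flourishing=False,
    metrics_measure_relationship_quality=False,
)
print(assess(deflection_system))
```

The two checks are deliberately kept as separate functions so that a passing result on one can never mask a failure on the other.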

For management regulators, the framework provides operational requirements. Structural accountability requires building intervention capabilities that actually function under operational conditions, maintaining human touchpoints with genuine authority to exercise judgment. Directional alignment requires monitoring whether AI deployment is strengthening or weakening stakeholder relationships, prioritizing relational outcomes alongside efficiency outcomes.

For assurance auditors, the framework provides assessment criteria. Auditors evaluate whether structural accountability exists operationally, not merely on paper. They assess whether humans actually exercise moral judgment over AI outcomes. They evaluate whether directional alignment is maintained by examining stakeholder experience, relationship metrics, and outcome patterns. Assessment that confirms both conditions provides meaningful assurance. Assessment revealing gaps identifies genuine governance failures.

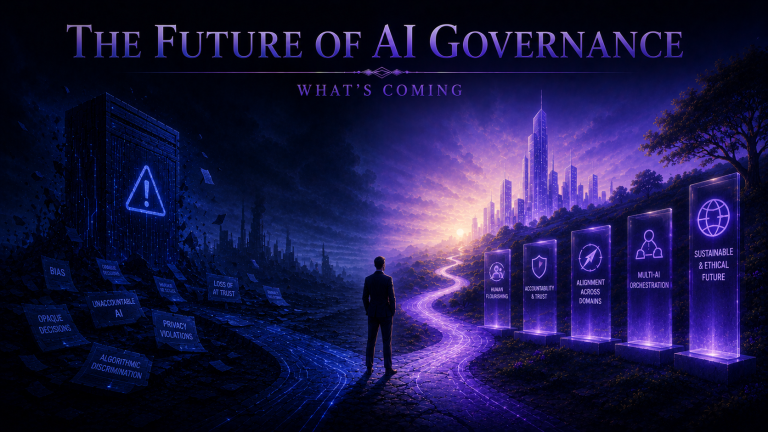

The next post in this series will examine the Daisy Chain Principle, which addresses how accountability must be maintained through complex AI architectures where multiple systems interact. The Two Conditions apply at every point in such chains, and the Daisy Chain Principle ensures that complexity does not become a means of escaping the accountability that ethical AI deployment requires.