Modern AI deployments increasingly involve chains of AI systems, each handing its output to the next. A job applicant submits a resume. An AI system parses the document and extracts structured data. That data flows to an AI screening system that evaluates qualifications against job requirements. The screening output feeds an AI ranking system that positions the candidate against others. The ranking flows to an AI scheduling system that either advances the candidate to interview or generates a rejection notification. Each link in this chain operates autonomously within its parameters. No single human reviews every transition. The chain may extend through many links before any human encounters an output.

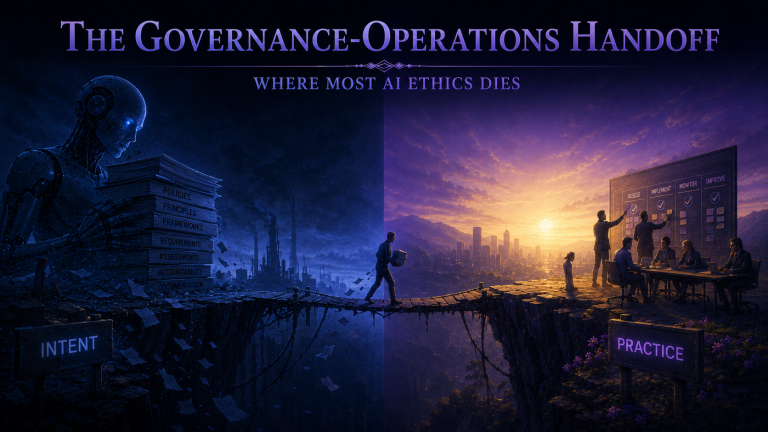

In previous posts, we established that AI lacks moral agency and that ethical AI deployment requires both structural accountability and directional alignment. The Daisy Chain Principle extends these requirements to complex architectures where AI coordinates other AI, where one system’s outputs become another’s inputs, where decisions propagate through networks before humans encounter results. The principle is uncompromising: any chain of AI roles must connect to human moral agency to be ethically legitimate. Accountability cannot diffuse into complexity, cannot be disclaimed by pointing to autonomous systems, cannot be escaped by creating sufficiently sophisticated automation.

The Accountability Void

When AI chains execute consequential decisions affecting people’s lives without human moral judgment mediating those decisions, the chain ends in what we might call an accountability void. Consider the job applicant whose resume was parsed incorrectly by the first AI in the chain. The parsing error propagated through screening, ranking, and scheduling systems. The applicant received an automated rejection. No human ever saw the application. No human judged that this particular candidate should be rejected. The outcome emerged from a system in which no one exercised moral judgment about this individual’s circumstances.

The Daisy Chain Principle holds that this architecture is ethically illegitimate regardless of how well each individual AI system performs. The problem is not that any particular link in the chain made an error. The problem is that the chain was designed to produce outcomes affecting humans without moral judgment mediating those outcomes. When the chain ends in automatic impact on humans, it ends in a void. The applicant at the receiving end encounters consequences for their life that emerged from a system where no one was accountable for their specific case.

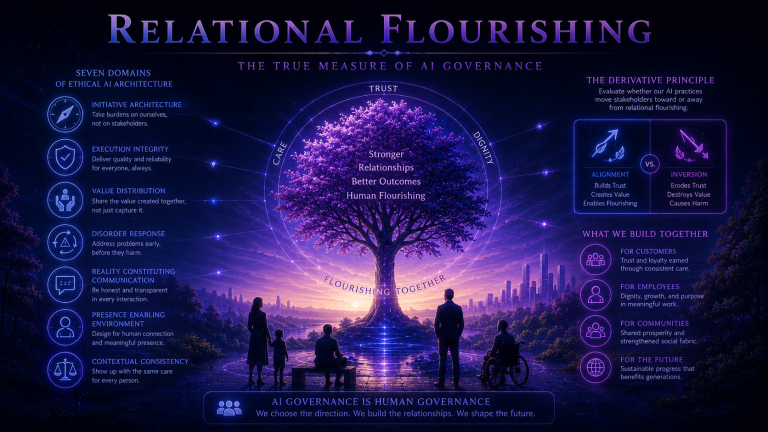

Organizations frequently attempt to escape accountability through the algorithm defense. When automated systems produce harmful outcomes, organizations claim that no human decided, that the outcome emerged from algorithmic processing, that responsibility diffuses into the complexity of the system. The Daisy Chain Principle rejects this defense categorically. When organizations design chains of AI decision-making, they choose to create systems in which outcomes will emerge without moral judgment. That choice is theirs. The humans who made architectural decisions, who established optimization parameters, who determined what oversight would or would not exist: those humans are accountable for the outcomes the system produces.

Backward and Forward Accountability

The Daisy Chain Principle distinguishes two types of accountability that must both be present. Backward accountability traces from outcomes through the chain to the humans who designed and architected it. These humans made choices about what systems to link, what parameters to set, what optimization targets to establish, what human oversight points to include or exclude. They chose to create a system in which no one would exercise moral judgment about individual cases. They are accountable for that architectural choice and for the consequences it produces. Their accountability does not dissolve because the system operates autonomously.

Forward accountability traces from current operation to the humans who maintain authority to intervene in the chain’s operation. These are the humans who can modify chain behavior, halt operation when it produces unacceptable outcomes, or extract individual cases from automated processing when moral judgment requires it. Their accountability is for choosing not to intervene when intervention is warranted. If they have authority to halt a harmful chain and choose not to exercise it, they bear responsibility for the harms that continue. If no one has effective intervention authority, that design choice triggers backward accountability for the architects who made intervention impossible.

Both backward and forward accountability must actually connect to identified humans with genuine authority and responsibility. Chains documented as terminating in committees that diffuse responsibility, organizational roles without operational authority, or historical figures without current involvement fail the Daisy Chain Principle. Accountability requires actual humans with actual authority who actually exercise moral judgment.

Complexity and Speed as Design Choices

A common objection holds that modern AI systems operate at scales and speeds that make human oversight of individual cases impossible. Millions of applications processed. Thousands of decisions per second. No human could possibly review each one. The Daisy Chain Principle responds that complexity and speed are design choices. Humans chose to create systems operating at scales and speeds that preclude individual oversight. That choice has moral implications. If the result is that decisions affecting people’s lives occur without moral judgment mediating them, then the choice to create such systems requires justification and alternative accountability structures.

In practice, this might mean statistical monitoring that detects when chains produce patterns requiring human attention. It might mean sample-based auditing where humans review representative cases to verify that chain behavior aligns with moral requirements. It might mean escalation triggers that automatically route unusual cases to human judgment. It might mean limiting automation to cases where the stakes are low enough that accountability gaps are acceptable. What it cannot mean is accepting that scale and speed excuse the absence of accountability. The choice to operate at such scale was a human choice. Humans remain accountable for what that choice produces.
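To make these options concrete, here is a minimal sketch of how escalation triggers, sample-based auditing, and statistical monitoring could sit at the end of a chain. Everything in it is an assumption for illustration: the `ChainOutput` fields, the `route` and `rejection_rate_alert` functions, and the threshold values are hypothetical and would need to be set by the humans accountable for the chain.

```python
import random
from dataclasses import dataclass


@dataclass
class ChainOutput:
    case_id: str
    decision: str       # e.g. "advance" or "reject"
    confidence: float   # hypothetical score in [0, 1] reported by the chain
    high_stakes: bool   # True if the outcome materially affects a person


def route(output, rng, confidence_floor=0.9, audit_rate=0.05):
    """Decide whether a chain output may take effect without human judgment."""
    # Escalation trigger: adverse, high-stakes outcomes always go to a human.
    if output.high_stakes and output.decision == "reject":
        return "human_review"
    # Escalation trigger: uncertain or unusual cases go to a human.
    if output.confidence < confidence_floor:
        return "human_review"
    # Sample-based auditing: a random fraction of the rest is reviewed afterwards.
    if rng.random() < audit_rate:
        return "audit_sample"
    return "automated"


def rejection_rate_alert(decisions, baseline_rate, tolerance=0.10):
    """Statistical monitoring: flag a batch whose rejection rate drifts from baseline."""
    if not decisions:
        return False
    rate = sum(d == "reject" for d in decisions) / len(decisions)
    return abs(rate - baseline_rate) > tolerance
```

The thresholds are where the moral judgment lives in this sketch. Someone has to choose `confidence_floor`, `audit_rate`, and `tolerance`, and that person is accountable for what those choices let through.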

Governance Requirements

The Daisy Chain Principle creates specific governance requirements for AI architectures involving multiple systems. Chain documentation must map every link from initiation through impact on humans. Documentation must identify what each link does, how links connect, where human oversight exists, and where chains affect humans without intervening moral judgment. Accountability termination points must identify specific humans with genuine authority for both backward and forward accountability. Names, not roles. Authority, not theoretical capability.
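One way to picture chain documentation is as a small data structure that can be checked mechanically for accountability voids. The sketch below is illustrative only: `Person`, `Link`, `ChainDoc`, and `find_voids` are hypothetical names, and the checks are a simplified reading of the requirements above, not a complete governance review.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Person:
    name: str             # a named individual, not a role or a committee
    can_intervene: bool   # operational authority, not theoretical capability


@dataclass
class Link:
    name: str
    affects_humans: bool
    reviewer: Optional[Person] = None   # human judgment at this link, if any


@dataclass
class ChainDoc:
    links: List[Link]
    architect: Optional[Person]   # backward accountability
    operator: Optional[Person]    # forward accountability


def find_voids(doc):
    """Return a list of accountability gaps found in the documented chain."""
    problems = []
    if doc.architect is None:
        problems.append("no named architect (backward accountability)")
    if doc.operator is None or not doc.operator.can_intervene:
        problems.append("no named operator with authority to intervene (forward accountability)")
    for link in doc.links:
        if link.affects_humans and link.reviewer is None:
            problems.append(f"link '{link.name}' affects humans with no human judgment")
    return problems


# The resume chain from the opening example, with placeholder names.
resume_chain = ChainDoc(
    links=[
        Link("parser", affects_humans=False),
        Link("screener", affects_humans=False),
        Link("ranker", affects_humans=False),
        Link("scheduler", affects_humans=True),
    ],
    architect=Person("A. Architect (placeholder)", can_intervene=True),
    operator=None,
)
for problem in find_voids(resume_chain):
    print(problem)
```

A check like this cannot establish that the named people actually exercise judgment. It only makes a missing name or missing authority visible before deployment.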

Intervention mechanisms must enable humans to halt chain operation, modify chain behavior, and extract individual cases for human review. These mechanisms must function under operational conditions, not merely exist on paper. Testing must verify that intervention can occur within timeframes meaningful for affected stakeholders. Governance review must evaluate whether proposed chains maintain a connection to human moral agency at every point where outcomes affect humans. Chains that end in accountability voids require redesign before deployment, not remediation after harm occurs.
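As a rough sketch of what halting and case extraction could look like in code, consider a control object that every link consults before passing a case onward. The class and method names here are hypothetical, and the sketch covers only halting and extraction. Modifying chain behavior and testing intervention under real operational load are left out.

```python
from typing import Optional, Set


class InterventionControl:
    """Checked by each link before a case moves to the next step."""

    def __init__(self) -> None:
        self.halted_by: Optional[str] = None   # name of the human who halted the chain
        self.extracted: Set[str] = set()       # case IDs pulled out for human review

    def halt(self, person: str) -> None:
        # Record who exercised the authority, so the decision traces to a named human.
        self.halted_by = person

    def extract(self, case_id: str) -> None:
        # Remove a single case from automated processing.
        self.extracted.add(case_id)

    def next_step(self, case_id: str) -> str:
        if self.halted_by is not None:
            return "halted"
        if case_id in self.extracted:
            return "human_review"
        return "continue"
```

Recording the name of the person who halts the chain is a deliberate choice in this sketch: it keeps the intervention itself tied to a specific human rather than to the system.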

Most AI orchestration architectures currently deployed fail the Daisy Chain Principle. They were designed for efficiency, scale, and speed, with little governance attention to maintaining accountability. The organizations deploying them have created systems where consequential decisions emerge without moral judgment mediating outcomes. The Daisy Chain Principle insists that every chain must end in human moral agency, that no architecture is sophisticated enough to escape accountability, that complexity is never an excuse for the void where accountability should be. The final post in this foundational series will introduce the First Mover Authority framework, which classifies AI deployments and helps organizations identify where these requirements become most critical.