Throughout this series, we have developed a comprehensive framework for AI governance grounded in human moral agency. We established that AI lacks moral agency and always will. We distinguished AI as tool from AI as role, with the shift creating critical governance requirements. We explored the Vacancy Problem, the Derivative Principle, the Two Conditions for ethical deployment, and the Daisy Chain Principle governing accountability in complex architectures. One concept has run through these discussions without full elaboration: First Mover Authority. This final foundational post develops that concept into a four-level classification model that determines what governance requirements apply to different AI deployments.

First Mover Authority asks a simple question: who initiates action in the human-AI interaction? The answer to this question places any AI deployment at one of four levels, each requiring different governance structures, different accountability mechanisms, and different intervention capabilities. Organizations that do not track which level their AI deployments occupy cannot govern them appropriately. They apply Level 1 frameworks to Level 3 systems and wonder why their governance fails to prevent harm.

Level 1: Human First Mover

At Level 1, humans initiate and AI responds. The human types a prompt. The human requests a report. The human triggers an automation. The human queries a database. In each case, human action comes first, and AI produces output in response to that action. The human retains decision authority. The human determines what to do with AI outputs. AI augments human capability without displacing human agency from the relationship structure.

Level 1 is AI as tool. The governance requirements are those of traditional technology management: appropriate use policies specifying how AI tools should and should not be used; access controls determining who can use which tools; data handling policies protecting information entered into AI systems; quality standards ensuring AI outputs meet organizational requirements; and vendor management ensuring adequate contractual protections. These requirements resemble those for other enterprise software. Existing governance bodies can manage them within established frameworks. The comprehensive AI governance requirements developed in this series do not apply at Level 1 because AI is not occupying roles; it is assisting humans who occupy roles.

Level 2: AI First Mover Within Human Architecture

At Level 2, AI initiates action within parameters that humans designed. AI produces outputs, generates recommendations, or takes actions without requiring human initiation for each instance. Humans respond by reviewing, modifying, or approving what AI created. The AI becomes the first mover, but humans retain control through review authority. A generative AI system produces complete documents from specifications; humans review and approve. An analytical AI system screens job applications and presents top candidates; humans make final selections. A process AI system routes customer inquiries to appropriate departments; humans oversee routing patterns.

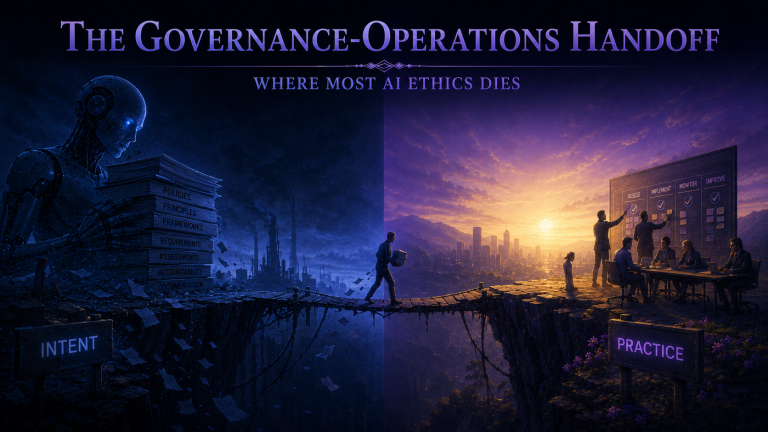

The transition from Level 1 to Level 2 is the critical governance boundary. This is where AI shifts from tool to role, and where the comprehensive governance frameworks developed throughout this series become necessary. At Level 2, stakeholders may interact with AI-generated content, receive AI-determined services, or experience AI-routed processes. The Vacancy Problem emerges. The Two Conditions for ethical deployment apply. Governance must ensure both structural accountability, which connects AI outcomes to human moral agents, and directional alignment, which ensures AI serves stakeholder flourishing. Level 2 deployments require documented accountability architecture, designed intervention mechanisms, accessible human touchpoints, and ongoing assessment of relational impact.

Level 3: AI Orchestrating AI

At Level 3, multiple AI systems coordinate their operations, with one AI’s output becoming another’s input. Humans designed the orchestration architecture, but individual orchestrated decisions may occur without human review. The hiring chain described in our discussion of the Daisy Chain Principle operates at Level 3: a parsing AI feeds a screening AI, which feeds a ranking AI, which feeds a scheduling AI, with humans overseeing patterns but not individual cases. Level 3 creates cascading risks, where problems in one system propagate through coordinated systems, and it can produce emergent behaviors that reviews of individual systems might not detect.

Level 3 requires all Level 2 governance plus additional orchestration-specific requirements. The Daisy Chain Principle becomes particularly critical: accountability must trace through every link in coordinated chains to human moral agents at both backward and forward termination points. Orchestration architecture documentation must map how systems interact, where emergent behaviors might arise, and where human oversight connects to chain operation. Cascading risk assessment must evaluate what happens when problems propagate through coordinated systems. Intervention mechanisms must enable halting not only individual systems but entire orchestrations when necessary. End-to-end monitoring must detect orchestration-level problems that individual system monitoring might miss.
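Two of these requirements lend themselves to a small illustration: tracing accountability through every link, and halting an entire orchestration at once. The sketch below is a minimal example, not a prescribed implementation. The `Link` record, its field names, and the owner labels are all hypothetical, and it assumes for illustration that accountability is documented as one named human per link.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Link:
    """One AI system in an orchestrated chain (hypothetical record)."""
    name: str
    accountable_human: Optional[str]  # None or "" means no documented owner
    running: bool = True


def unaccountable_links(chain: list[Link]) -> list[str]:
    """Return the names of links with no documented accountable human."""
    return [link.name for link in chain if not link.accountable_human]


def halt_orchestration(chain: list[Link]) -> None:
    """Stop every system in the chain, not just the one that misbehaved."""
    for link in chain:
        link.running = False


# The hiring chain from the text, with illustrative (invented) owner labels.
hiring_chain = [
    Link("parsing", "HR operations lead"),
    Link("screening", None),
    Link("ranking", "talent director"),
    Link("scheduling", "recruiting coordinator"),
]

gaps = unaccountable_links(hiring_chain)  # ["screening"]
if gaps:
    halt_orchestration(hiring_chain)
```

Halting the whole chain when a single link lacks an owner is a deliberately strict choice for the example; an organization might instead block deployment at review time.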

Level 4: AI Self-Modification

At Level 4, AI modifies its own operational parameters based on experience or environmental feedback. Humans set initial objectives and constraints, but AI adjusts how it pursues those objectives without human approval for each adjustment. A trading AI that modifies its strategies based on market performance operates at Level 4. A customer service AI that adjusts its responses based on satisfaction metrics operates at Level 4. These systems evolve their behavior over time in ways that architects cannot fully predict and may not fully understand.

Level 4 represents the highest governance challenge in the framework. Self-modification can produce gradual drift, where cumulative parameter changes yield behavior substantially different from what architects intended, even when individual modifications were small and appropriate. Governance requires explicit boundaries on what modifications are permissible, continuous monitoring that detects parameter changes as they occur, constraints preventing modification beyond acceptable ranges, and intervention protocols that activate when self-modification produces unacceptable outcomes. Human authority to roll back modifications must be maintained. Periodic review must assess whether the system's evolution trajectory serves intended purposes or has drifted toward inversion.
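The drift problem can be made concrete with a small sketch. It assumes a single numeric parameter and a hypothetical `GovernedParameter` class; real self-modifying systems are far more complex. The point it illustrates is that bounds should be measured against the human-approved baseline rather than against the previous value, so that a series of small, individually acceptable changes cannot accumulate unnoticed.

```python
from dataclasses import dataclass, field


@dataclass
class GovernedParameter:
    """A self-adjustable parameter with human-set bounds (hypothetical)."""
    baseline: float   # value approved by human architects
    max_drift: float  # permitted absolute distance from the baseline
    value: float = field(init=False)
    history: list[float] = field(default_factory=list)

    def __post_init__(self) -> None:
        self.value = self.baseline

    def propose(self, new_value: float) -> bool:
        """Apply a self-modification only if cumulative drift stays in bounds."""
        if abs(new_value - self.baseline) > self.max_drift:
            return False  # rejected: outside the acceptable range
        self.history.append(self.value)  # log the value being replaced
        self.value = new_value
        return True

    def roll_back(self) -> None:
        """Human-invoked: restore the baseline and clear the change log."""
        self.history.clear()
        self.value = self.baseline


threshold = GovernedParameter(baseline=1.0, max_drift=0.5)
results = [threshold.propose(v) for v in (1.2, 1.4, 1.6)]
# Each step is only 0.2, but the third would put the value 0.6 from baseline.
# results == [True, True, False]
```

A rejected proposal is a natural trigger for the intervention protocols described above, and `roll_back` stands in for the human authority to undo self-made changes.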

Governance Implications of the Four Levels

The Four-Level Model enables governance professionals to match governance requirements to deployment characteristics. Level 1 requires traditional technology governance. Levels 2 through 4 require the comprehensive AI governance frameworks this series has developed, with requirements intensifying at each level. Organizations must classify their AI deployments accurately and apply level-appropriate governance. Classification must be based on actual operational behavior, not vendor marketing or stated intent. An AI system marketed as decision support but effectively making decisions operates at Level 2 regardless of what vendors claim.
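As a rough sketch, classification by observed behavior might look like the following. The `ObservedBehavior` fields are hypothetical simplifications of the level definitions above, and the rule that the highest applicable level wins is an assumption of this example, motivated by the way requirements intensify at each level.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    HUMAN_FIRST_MOVER = 1
    AI_FIRST_MOVER = 2
    AI_ORCHESTRATING_AI = 3
    AI_SELF_MODIFICATION = 4


@dataclass(frozen=True)
class ObservedBehavior:
    """How a deployment actually behaves in operation (hypothetical fields)."""
    ai_acts_without_human_initiation: bool
    chained_to_other_ai_without_case_review: bool
    adjusts_own_parameters_without_approval: bool


def classify(behavior: ObservedBehavior) -> Level:
    """Map observed behavior to a First Mover Authority level."""
    # Check the most demanding level first so the highest applicable one wins.
    if behavior.adjusts_own_parameters_without_approval:
        return Level.AI_SELF_MODIFICATION
    if behavior.chained_to_other_ai_without_case_review:
        return Level.AI_ORCHESTRATING_AI
    if behavior.ai_acts_without_human_initiation:
        return Level.AI_FIRST_MOVER
    return Level.HUMAN_FIRST_MOVER


# Marketed as "decision support", but observed to act on its own initiative.
decision_support = ObservedBehavior(
    ai_acts_without_human_initiation=True,
    chained_to_other_ai_without_case_review=False,
    adjusts_own_parameters_without_approval=False,
)
level = classify(decision_support)  # Level.AI_FIRST_MOVER
```

Note that the function takes no input describing vendor claims or stated intent; only observed behavior determines the result.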

Organizations must also monitor for level transitions. AI deployments can drift from one level to another as capabilities evolve, usage patterns change, or operational practices adapt. A Level 1 tool can evolve toward Level 2 as users delegate more decision authority to it. A Level 2 deployment can become Level 3 when organizations connect it to other AI systems. Level transitions trigger governance transitions; organizations must detect when transitions occur and adjust governance accordingly.

AI Role Inventories should document First Mover Authority level for every AI deployment at Level 2 or above. Inventory management should include periodic level verification, ensuring documented classifications remain accurate as systems evolve. Governance review processes should require level assessment for new deployments and level re-assessment when significant operational changes occur.
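A minimal sketch of an inventory entry with a periodic verification check follows. The `InventoryEntry` fields, the example deployment, and the 180-day verification interval are illustrative assumptions; the framework does not prescribe a specific interval.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class InventoryEntry:
    """One row of an AI Role Inventory (hypothetical fields)."""
    deployment: str
    documented_level: int  # First Mover Authority level, 2 through 4
    last_verified: date


def needs_review(
    entry: InventoryEntry,
    observed_level: int,
    today: date,
    max_age: timedelta = timedelta(days=180),  # assumed review interval
) -> list[str]:
    """Return the reasons, if any, that this entry needs governance review."""
    reasons = []
    if observed_level != entry.documented_level:
        reasons.append(
            f"level transition: {entry.documented_level} -> {observed_level}"
        )
    if today - entry.last_verified > max_age:
        reasons.append("verification overdue")
    return reasons


screener = InventoryEntry("resume screener", 2, date(2024, 1, 1))

# The screener was later connected to a ranking AI, so it now behaves as Level 3.
reasons = needs_review(screener, observed_level=3, today=date(2024, 2, 1))
# reasons == ["level transition: 2 -> 3"]
```

Returning reasons rather than a simple yes or no keeps the trigger for each review visible, which helps distinguish a routine re-verification from a genuine level transition.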

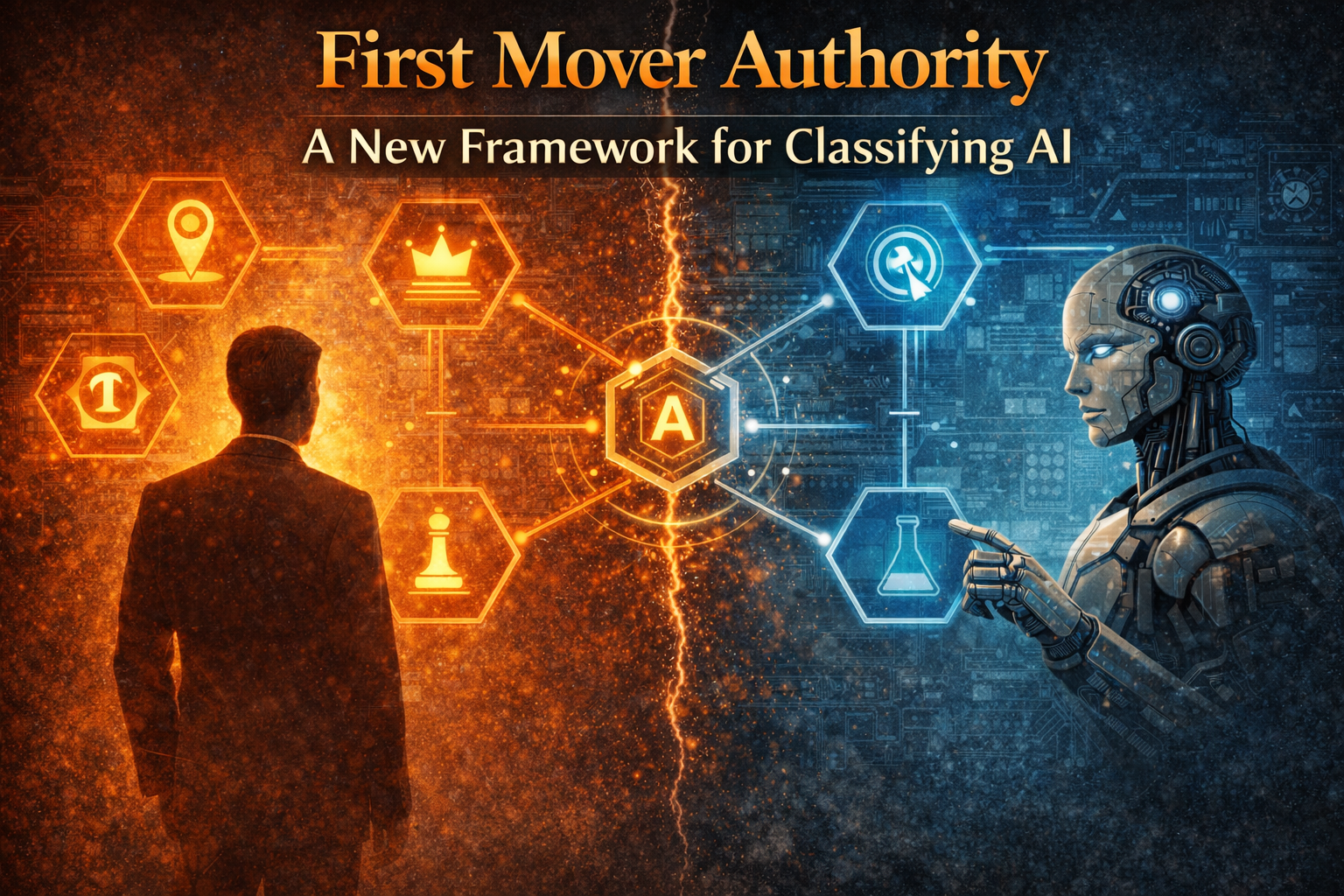

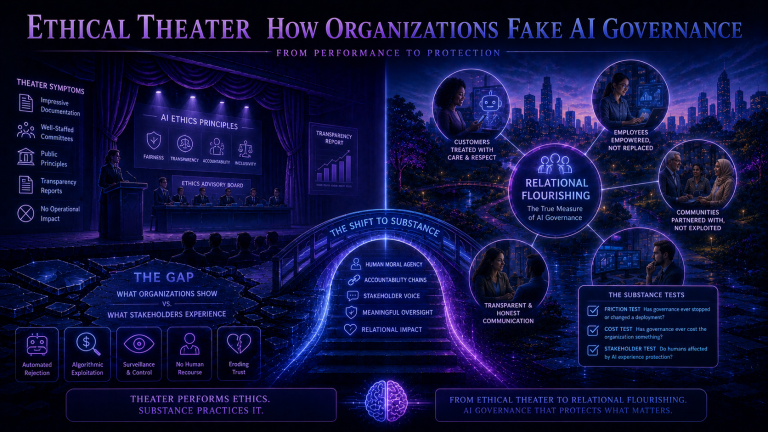

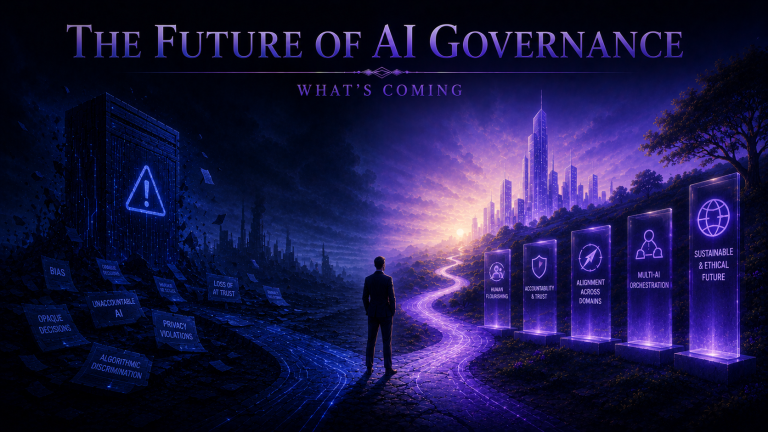

This post concludes the foundational series establishing the conceptual framework for ethical AI governance. We have moved from critique of control-based paradigms through philosophical foundations to practical classification methodology. The concepts developed here provide the vocabulary and analytical tools governance professionals need to evaluate AI deployment with precision. Subsequent posts will apply these foundations to specific domains, industries, and governance challenges, building on the shared understanding this series has established. Organizations that master these foundations position themselves to govern AI in ways that genuinely serve stakeholder flourishing rather than producing the governance theater that control-based paradigms inevitably create.